PRODUCT SERIES

Our product line is diverse and wide-ranging.

QUALITY DETAILS

We pursue perfection in every detail so that you can feel our full sincerity.

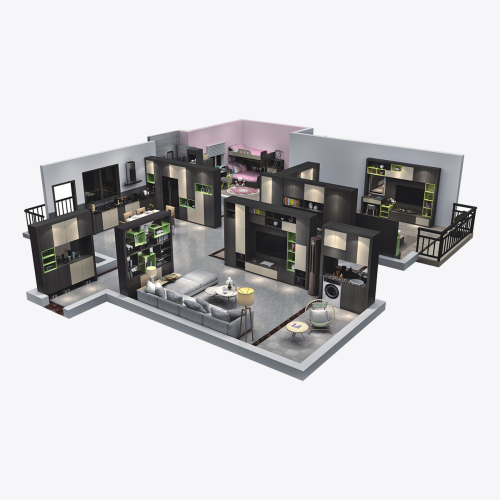

ONE-STOP WHOLE HOUSE CUSTOMIZATION

Less effort, more peace of mind: a carefully chosen home that is truly yours.

- Hengxing whole-wood furnishings, tailored to you

- 720imax panoramic design

- A new immersive VR experience

- Hengxing whole-house customization: the choice is yours

- Carefully selected, high-quality raw materials

HOT PRODUCTS

Crafted with care to create a distinctive home of your own.

PRODUCT WORLD

WHOLE HOUSE CUSTOMIZATION

One-stop whole-house customization: less effort, more peace of mind.

SOPHISTICATED CRAFT

Choosing Hengxing means choosing quality you can rely on.

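FRANCHISE CENTER

* Five requirements for distributors *

* Seven support policies *

* The complete distributor recruitment process *

* Advantages of franchising and distribution *

* Investment protection *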


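Backed by the strength of its brand, a mature sales and service network, and proven experience in market operations and management, Hengxing is committed to expanding in the door industry, standardizing its practices, raising service quality and its public image, building a first-class brand among domestic competitors, and meeting consumers' latent service needs.

At the same time, we work hand in hand with our distributors to create a win-win situation. Hengxing Doors warmly welcomes you to join us!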

- Company Profile

- Corporate Culture

- Technical Strength

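Company Profile

YONGKANG HENGXING VISION WOOD PRODUCTS CO.,LTD. was founded in 2006. The company is located in Yongkang, Zhejiang, a city renowned across the world as "China's Door Capital" and "China's Hardware Capital". It has been awarded the titles "China Well-Known Trademark" and "Top 30 National Wooden Door Enterprise". It is a new-type group enterprise that integrates whole-house customization business recruitment and franchising, custom wall paneling, and the R&D, design, production, sales, and service of light-luxury wooden doors and wall panels, operating as a manufacturer of solid-wood composite doors, solid-wood doors, and wall panels. The company specializes in villa three-proof entrance doors, copper doors, imitation copper doors, log doors, solid-wood composite doors, and lacquered eco-wood doors. Its products are sold in Yongkang and Wenzhou in Zhejiang, as well as in Jiangsu, Fujian, Jiangxi, Anhui, Guangdong, and other regions.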


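Corporate Culture

YONGKANG HENGXING VISION WOOD PRODUCTS CO.,LTD. has been awarded the titles "China Well-Known Trademark" and "Top 30 National Wooden Door Enterprise". It is a new-type group enterprise that integrates R&D, design, production, sales, and service. After years of development and growth, it has become a large-scale, scientifically managed, modern group enterprise.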


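Technical Strength

YONGKANG HENGXING VISION WOOD PRODUCTS CO.,LTD. has grown over many years into a large-scale, scientifically managed, modern group enterprise. It operates modern plants with advanced production lines: a wooden-door factory covering more than 8,000 square meters and a steel-door factory covering more than 26,000 square meters.

All products are designed with CAD, manufactured on a modern single-layer steel-plate door production line, and inspected with advanced production and testing equipment. By applying advanced management techniques and high-end equipment, and by combining the strengths of different segments of the door industry, the company has spent several years pioneering and growing. Guided by the principles of being market-oriented, technology-led, and talent-driven, it has rapidly developed into a modern group enterprise.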

HOME IMPROVEMENT STRATEGY

Browse our wide range of home improvement guides and make your home even more beautiful.